|

I am a research engineer at Meta. I obtained my CS PhD at Hong Kong University of Science and Technology (HKUST), where I worked on efficient deep learning. I was a member of the Vision and System Design Lab, advised by Prof. Tim Kwang-Ting CHENG. I work closely with Zechun Liu from Meta Reality Lab. I worked as a research intern at Snap Research Creative Vision Group, supervised by Jian Ren and Anil Kag. I also completed a research internship at Microsoft Research Asia (MSRA). CV / Google Scholar / Twitter (X) / Zhihu / Github / Openreview |

|

|

[2025-10] Join Meta Reality Lab as a research engineer

[2025-02] One paper (SnapGen) is accepted by CVPR 2025

[2024-09] Two papers (CoT-Influx and RoLoRA) are accepted by EMNLP 2024

[2024-08] Two papers (ACS-QAT and Quantization Variation) are accepted by TMLR

[2024-07] Start my internship at Snap Research Santa Monica

[2023-09] One paper (LLM-FP4) is accepted by EMNLP 2023

[2023-05] Start my internship at Microsoft Research Asia (MSRA)

[2022-05] One paper (SDQ) is accepted by ICML 2022

[2021-01] One paper (TIN) is accepted by TPAMI

[2020-08] Start my PhD at Hong Kong University of Science and Technology (HKUST)

[2020-06] Graduate from Shanghai Jiao Tong University (SJTU)

[2020-03] One paper (PaStaNet) is accepted by CVPR 2020

[2019-06] Start my research internship at University of California, Los Angeles (UCLA)

[2019-03] One paper (TIN) is accepted by CVPR 2019 |

|

My research interests lie in the general area of artificial intelligence, particularly in efficient large-scale models (LLMs, Diffusion Models, etc.) and human-centric computer vision. More concretely, I focus on quantization, algorithm-hardware co-design, human-object interaction, and healthcare. |

Efficient AI Algorithm | |

|

Jierun Chen*, Dongting Hu*, Xijie Huang*, Huseyin Coskun, Arpit Sahni, Aarush Gupta, Anujraaj Goyal, Dishani Lahiri, Rajesh Singh, Yerlan Idelbayev, Junli Cao, Yanyu Li, Kwang-Ting Cheng, Mingming Gong, S.-H. Chan, Sergey Tulyakov, Anil Kag, Yanwu Xu, Jian Ren (* indicates Equal Contribution) CVPR 2025 (Spotlight) Paper/Project Page/Snap Newsroom/TechCrunch An extremely small and fast T2I model that generates high-resolution and high-quality images on mobile platforms. Our model, for the first time, demonstrates the generation of 1024x1024 px images on a mobile device in 1.2-2.3 seconds. |

|

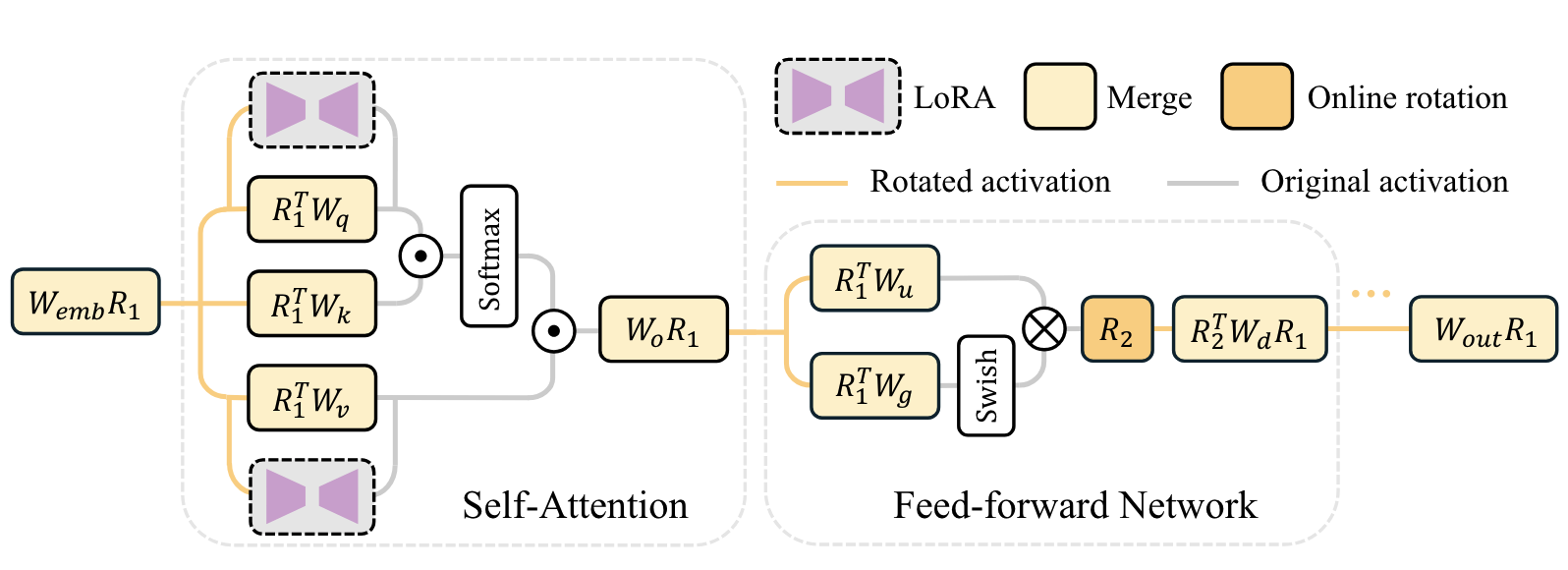

Xijie Huang, Zechun Liu, Shih-Yang Liu, Kwang-Ting Cheng EMNLP 2024 Findings Paper/Code/HF Repo A LoRA-based scheme for effective weight-activation quantization. RoLoRA utilizes rotation for outlier elimination and proposes rotation-aware fine-tuning to preserve the outlier-free characteristics in rotated LLMs. |

|

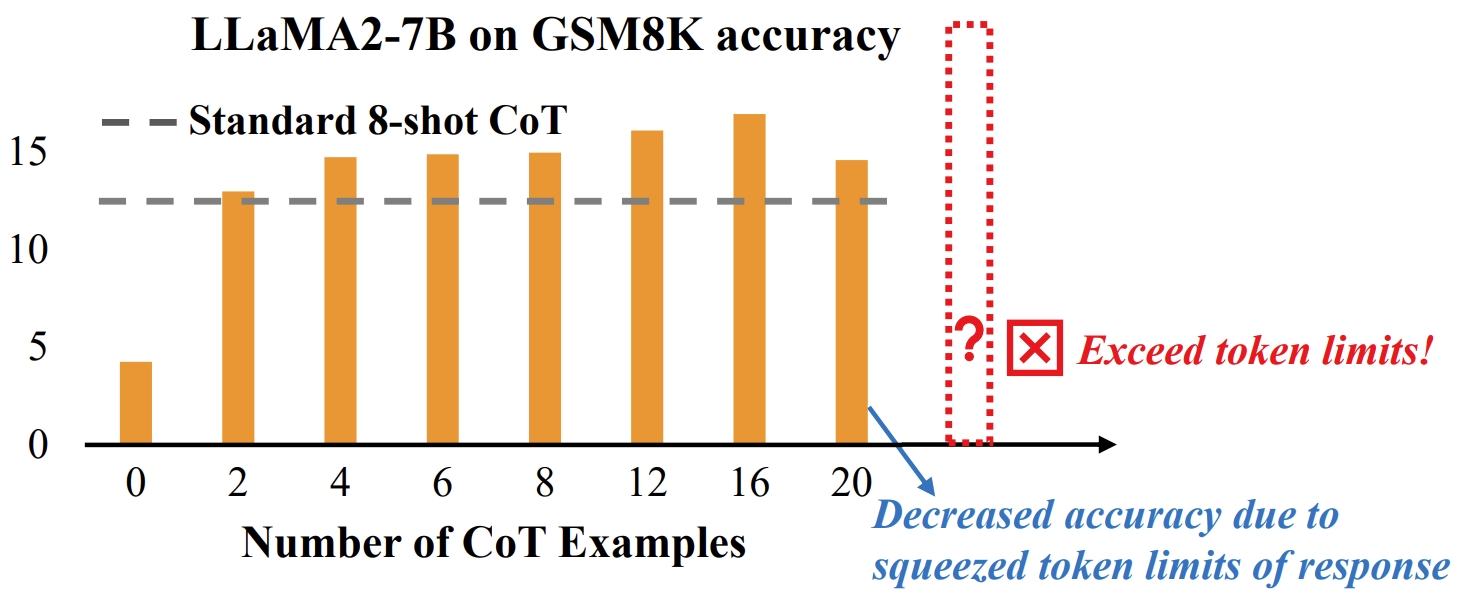

Xijie Huang, Li Lyna Zhang, Kwang-Ting Cheng, Fan Yang, Mao Yang EMNLP 2024 Main Paper/Code A novel approach to push the boundaries of few-shot CoT learning to improve LLM math reasoning capabilities. We propose a coarse-to-fine pruner as a plug-and-play module for LLMs, which first identifies crucial CoT examples from a large batch and then further prunes unimportant tokens. |

|

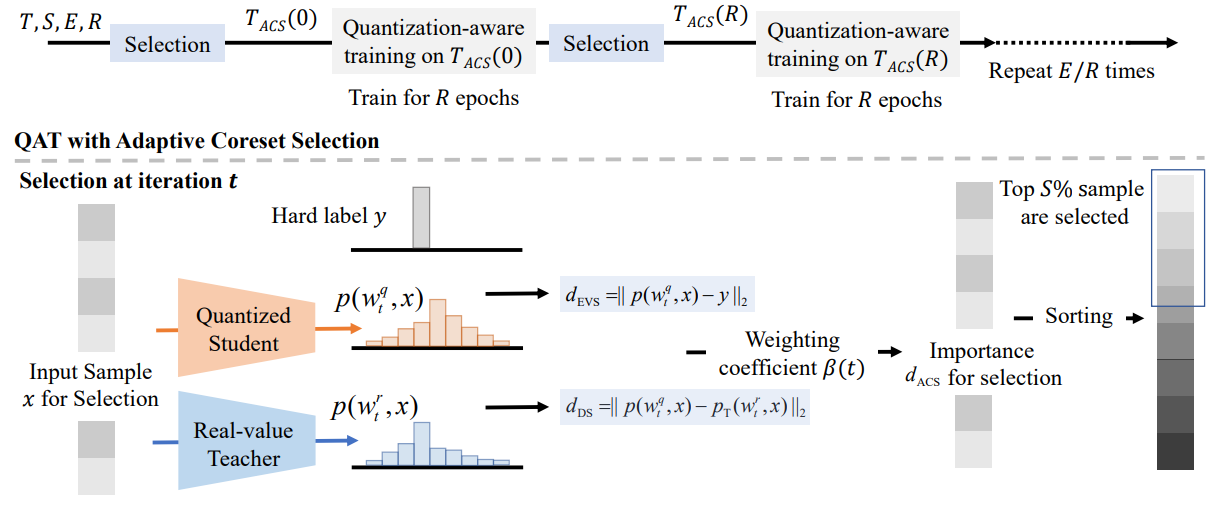

Xijie Huang, Zechun Liu, Shih-Yang Liu, Kwang-Ting Cheng Transactions on Machine Learning Research (TMLR) 2024 Paper/Code/OpenReview A new angle, coreset selection, for improving the training efficiency of quantization-aware training. Our method achieves 68.39% accuracy with a 4-bit quantized ResNet-18 on the ImageNet-1K dataset using only a 10% subset, an absolute gain of 4.24% over the previous SoTA. |

|

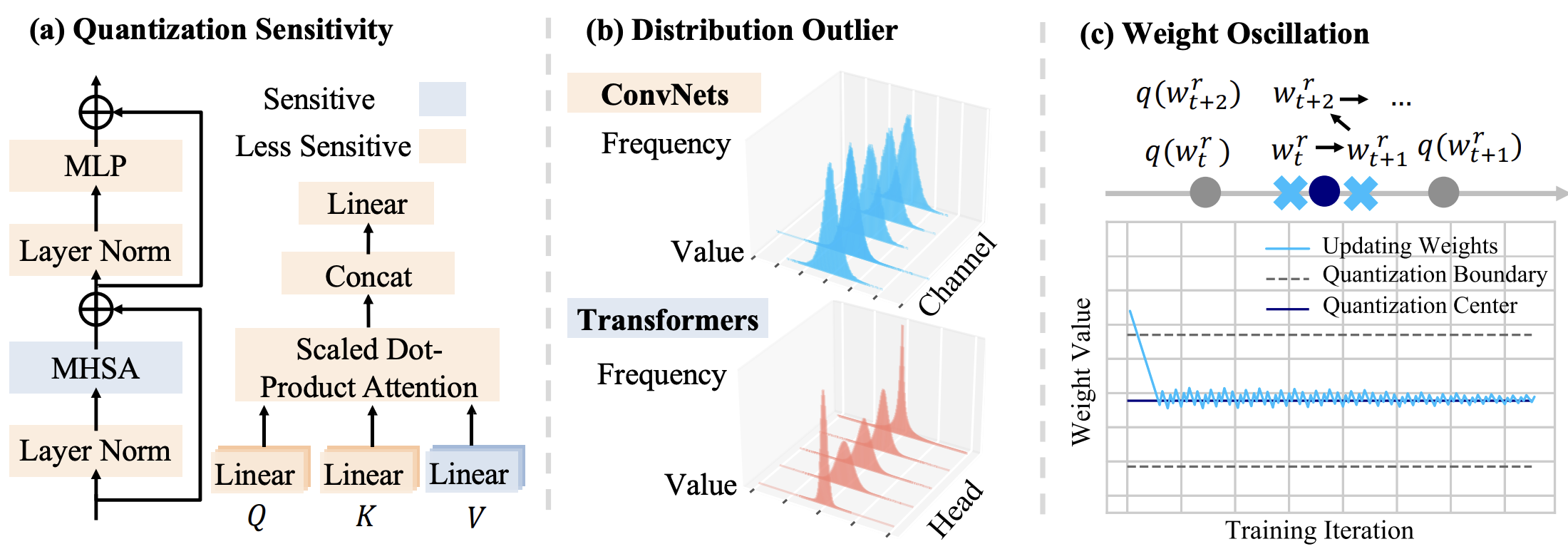

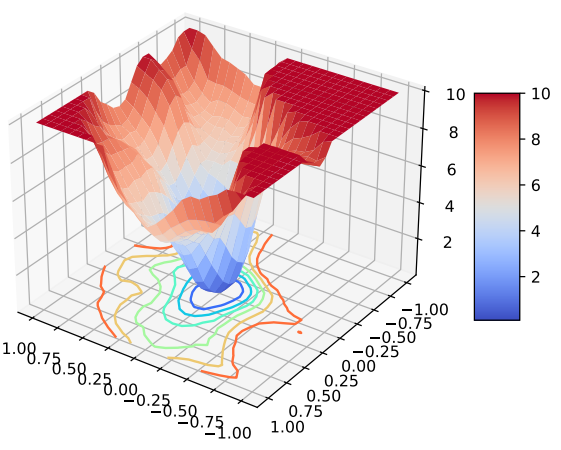

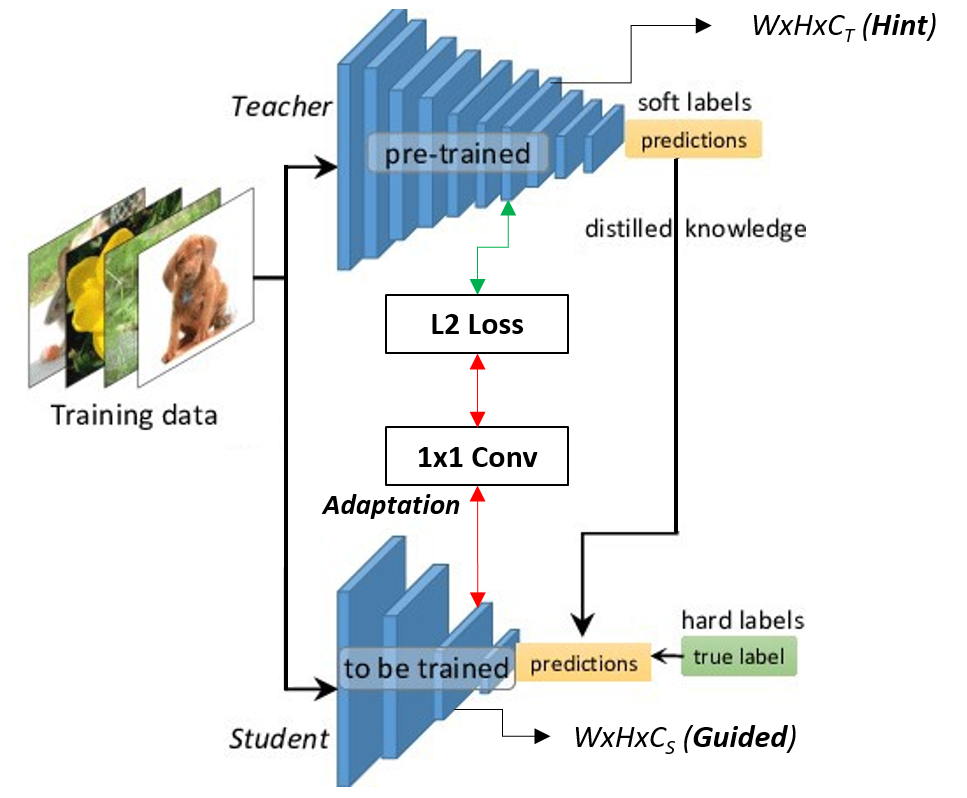

Xijie Huang, Zhiqiang Shen, Pingcheng Dong, Kwang-Ting Cheng Transactions on Machine Learning Research (TMLR) 2024 Paper/Code/OpenReview An analysis of the underlying difficulty of transformer quantization from the perspective of variation. A multi-crop knowledge distillation-based quantization method is proposed. |

|

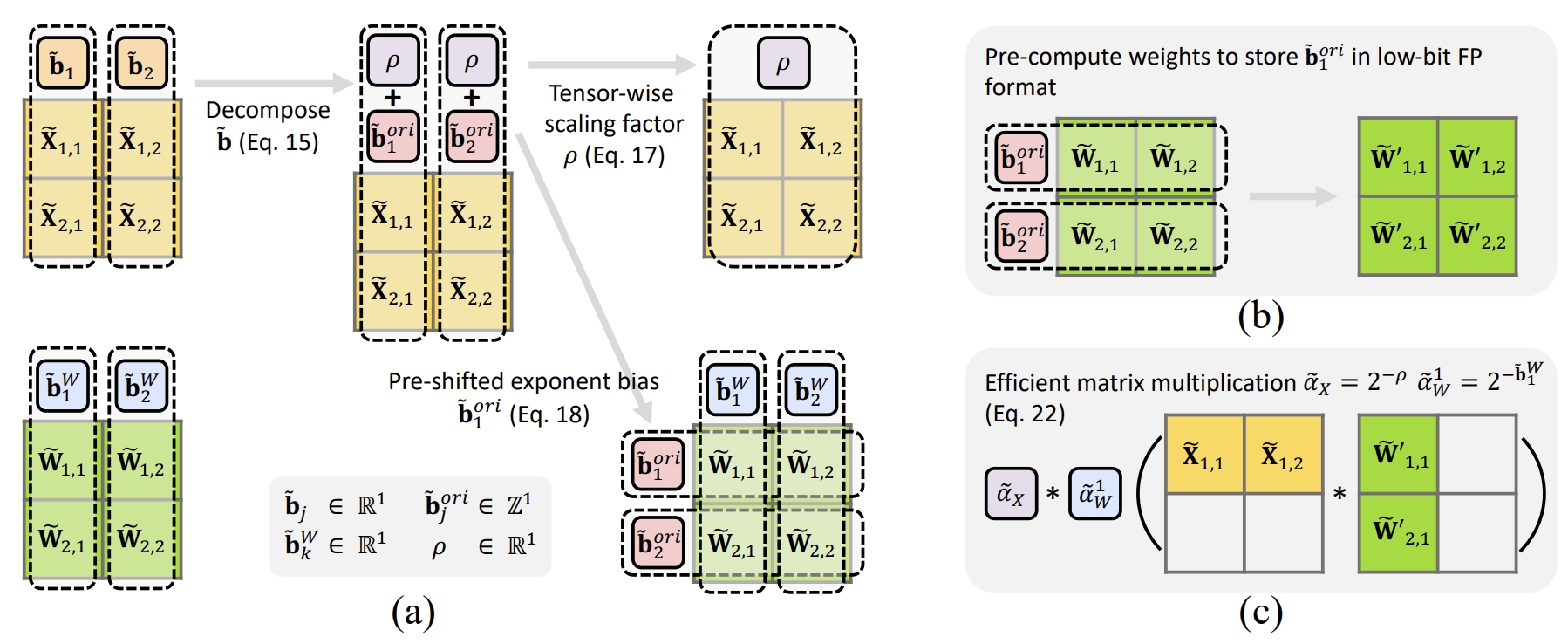

Shih-Yang Liu, Zechun Liu, Xijie Huang, Pingcheng Dong, Kwang-Ting Cheng EMNLP 2023 Main Paper/Code A per-channel activation quantization scheme with additional scaling factors that can be reparameterized as exponential biases of weights, incurring a negligible cost. Our method can quantize both weights and activations in the BERT model to only 4 bits and achieves an average GLUE score of 80.07, which is only 3.66 lower than the full-precision model, significantly outperforming the previous state-of-the-art method, which had a gap of 11.48. |

|

Xijie Huang, Zhiqiang Shen, Shichao Li, Zechun Liu, Xianghong Hu, Jeffry Wicaksana, Eric Xing, Kwang-Ting Cheng ICML 2022 (Spotlight) Paper / Talk / Slides A novel stochastic quantization framework to learn the optimal mixed precision quantization strategy. |

Efficient AI Hardware | |

|

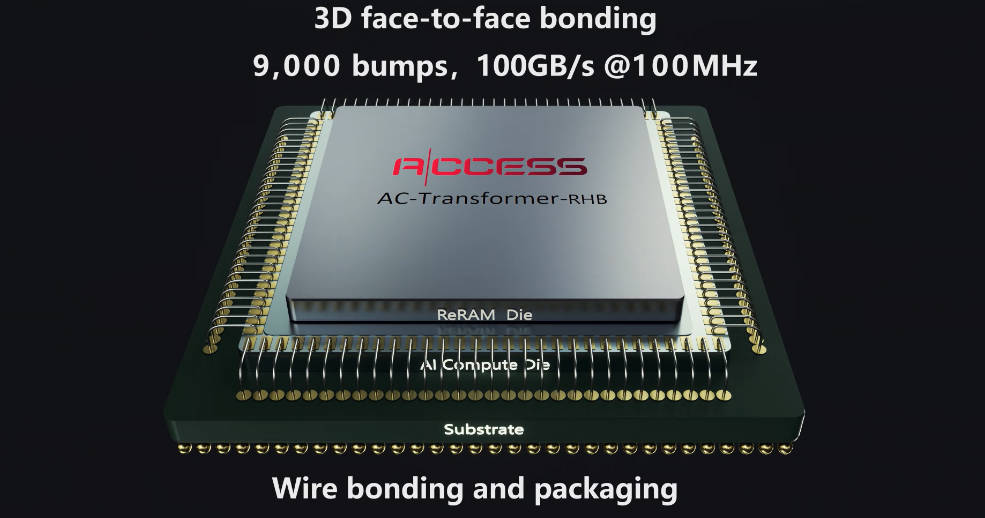

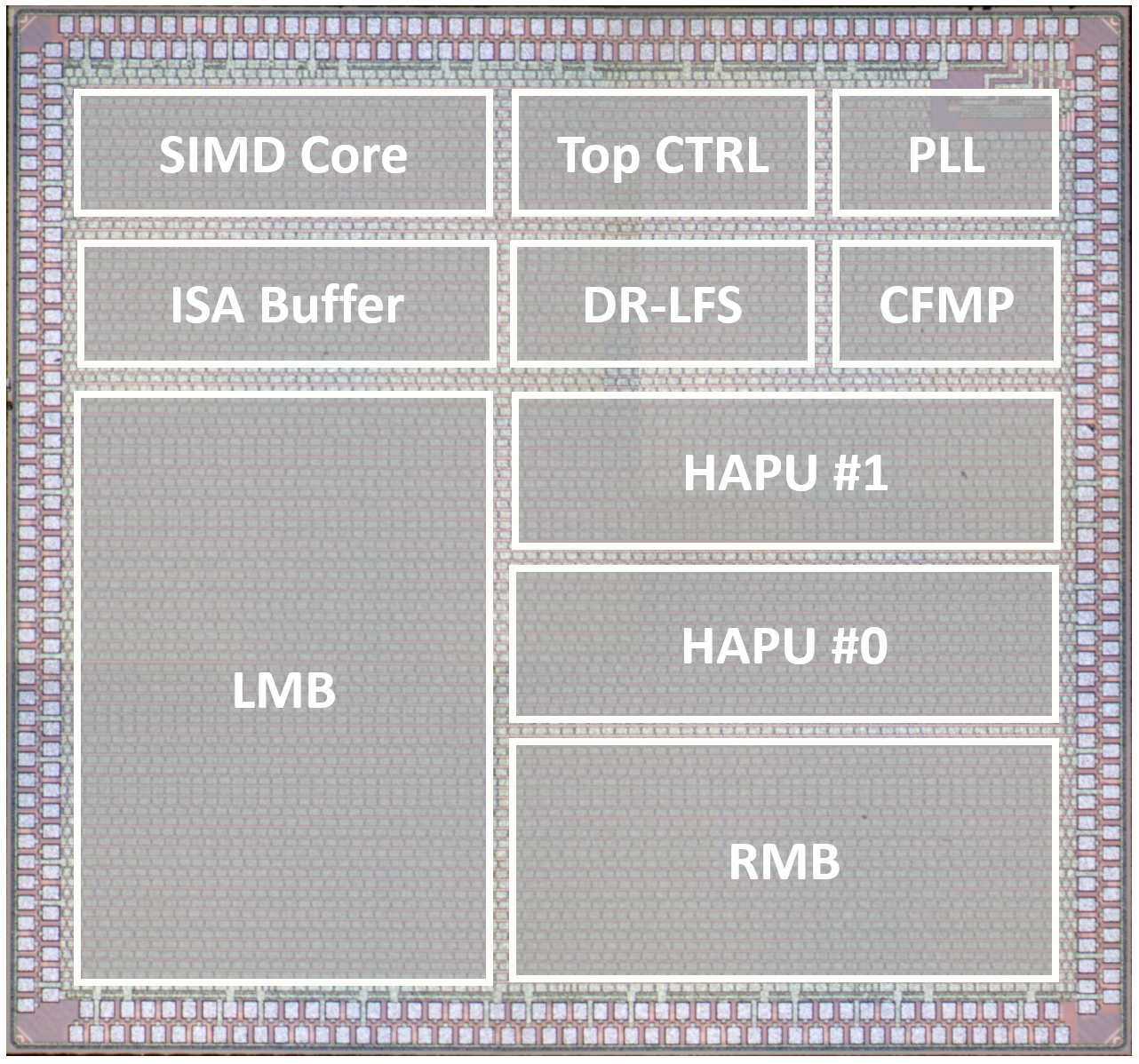

Pingcheng Dong, Yonghao Tan, Xuejiao Liu, Peng Luo, Yu Liu, Di Pang, Songchen Ma, Xijie Huang, Shih-Yang Liu, Dong Zhang, Luhong Liang, Chi-Ying Tsui, Fengbin Tu, Liang Zhao, Kwang-Ting Cheng IEEE International Solid-State Circuits Conference (ISSCC) 2026. A ReRAM-on-Logic stacked LLM accelerator featuring outlier-free quantization, block-clustered weight compression, and adaptive parallel speculative decoding, achieving a throughput of 14.08 to 135.69 tokens/s. |

|

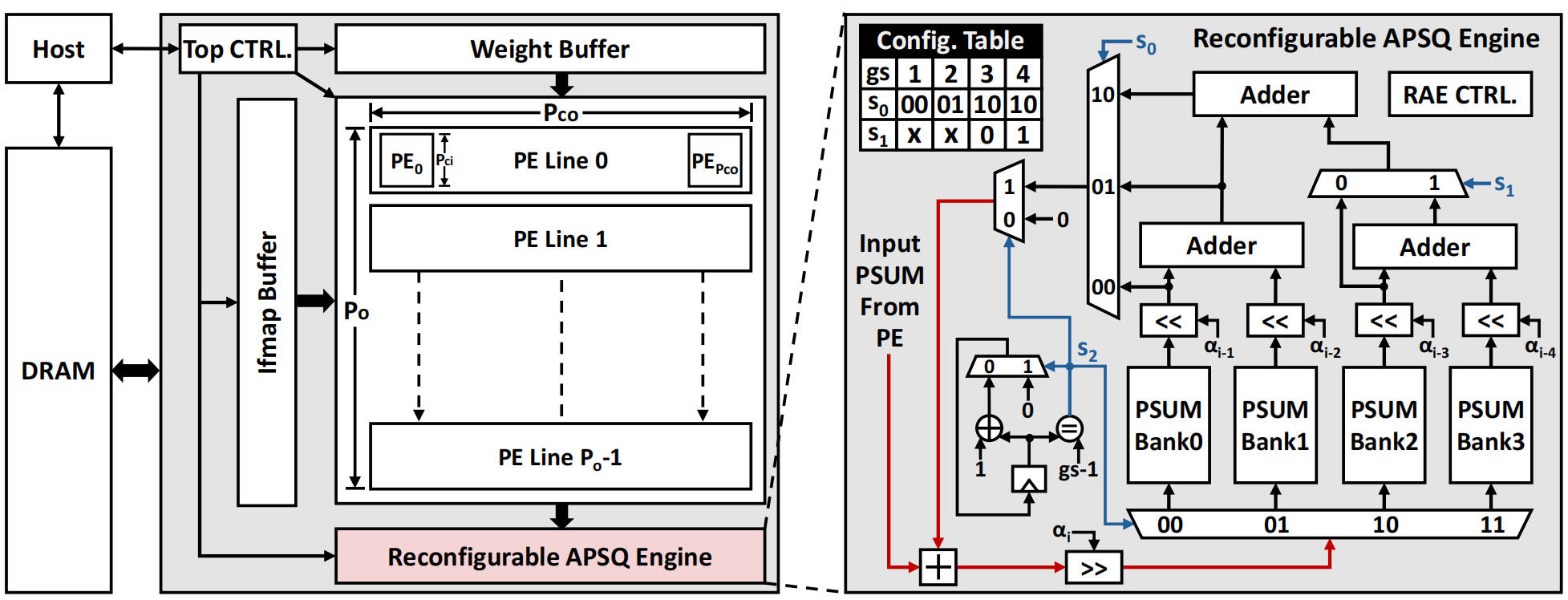

Yonghao Tan*, Pingcheng Dong*, Yongkun Wu, Yu Liu, Xuejiao Liu, Peng Luo, Shih-Yang Liu, Xijie Huang, Dong Zhang, Luhong Liang, Kwang-Ting Cheng (* indicates Equal Contribution) ACM/IEEE Design Automation Conference (DAC) 2025 Paper An algorithm-hardware co-design approach that quantizes the additive partial sums in MAC arrays to reduce accumulator bit-width and improve hardware efficiency without sacrificing model accuracy. |

|

Pingcheng Dong, Yonghao Tan, Xuejiao Liu, Peng Luo, Yu Liu, Luhong Liang, Yitong Zhou, Di Pang, Manto Yung, Dong Zhang, Xijie Huang, Shih-Yang Liu, Yongkun Wu, Fengshi Tian, Chi-Ying Tsui, Fengbin Tu, Kwang-Ting Cheng IEEE International Solid-State Circuits Conference (ISSCC) 2025. Paper A hybrid attention mechanism coupled with a KV-weight-reused scheduler to fuse the layers between convolution and attention. Additionally, we introduce a cascaded feature map pruning strategy to achieve unified convolution-attention pruning. |

|

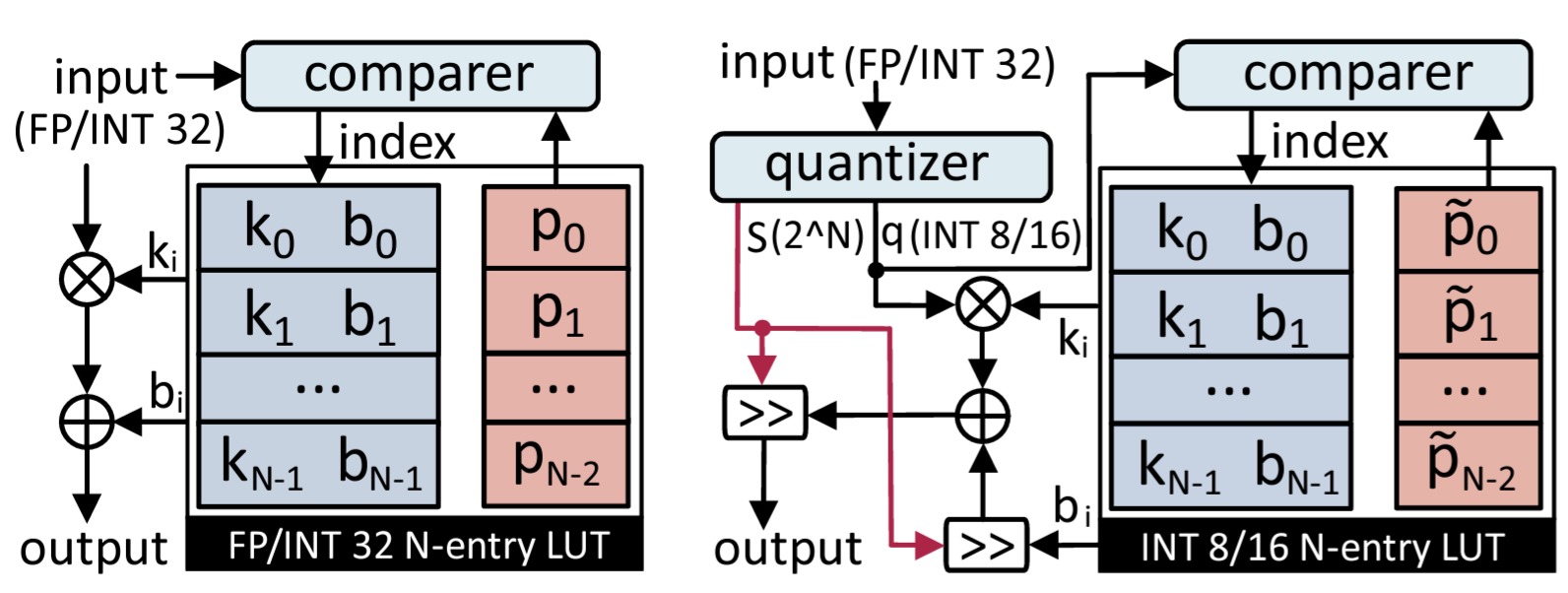

Pingcheng Dong, Yonghao Tan, Dong Zhang, Tianwei Ni, Xuejiao Liu, Yu Liu, Peng Luo, Luhong Liang, Shih-Yang Liu, Xijie Huang, Huaiyu Zhu, Yun Pan, Fengwei An, Kwang-Ting Cheng ACM/IEEE Design Automation Conference (DAC) 2024 Paper/Code A genetic LUT-approximation algorithm, GQA-LUT, that automatically determines the parameters with quantization awareness. The results demonstrate that GQA-LUT achieves negligible degradation on the challenging semantic segmentation task for both vanilla and linear Transformer models. |

|

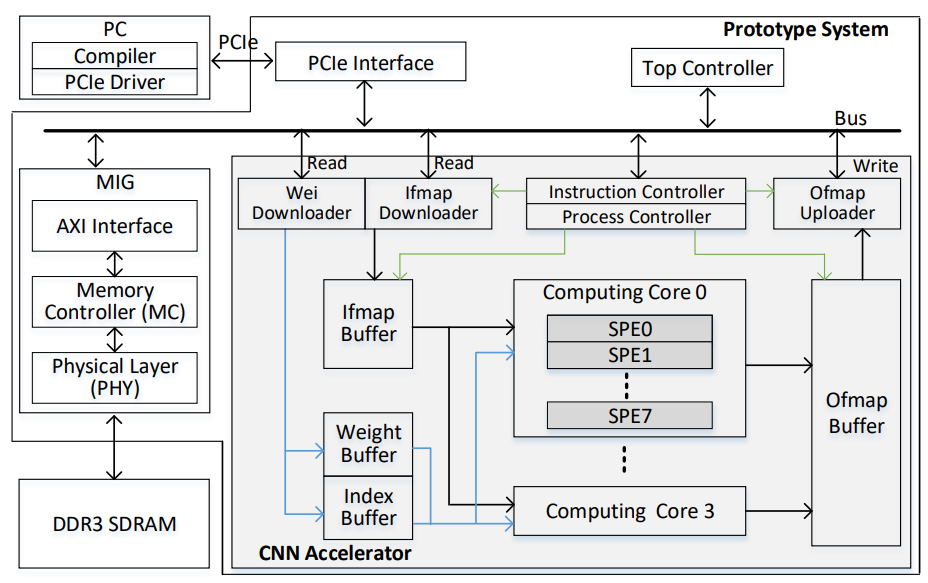

Xianghong Hu, Xuejiao Liu, Yu Liu, Haowei Zhang, Xijie Huang, Xihao Guan, Luhong Liang, Chi Ying Tsui, Xiaomeng Xiong, Kwang-Ting Cheng IEEE Transactions on Circuits and Systems II: Express Briefs (TCAS-II) 2023 Paper A tiny accelerator for mixed-bit sparse CNNs featuring a novel single-vector-based compressed sparse filter (CSF) scheme and a single-input multiple-output scratch pad (SIMO SPad) to compress weights effectively and fetch the needed input activations. |

Human-centric Vision | |

|

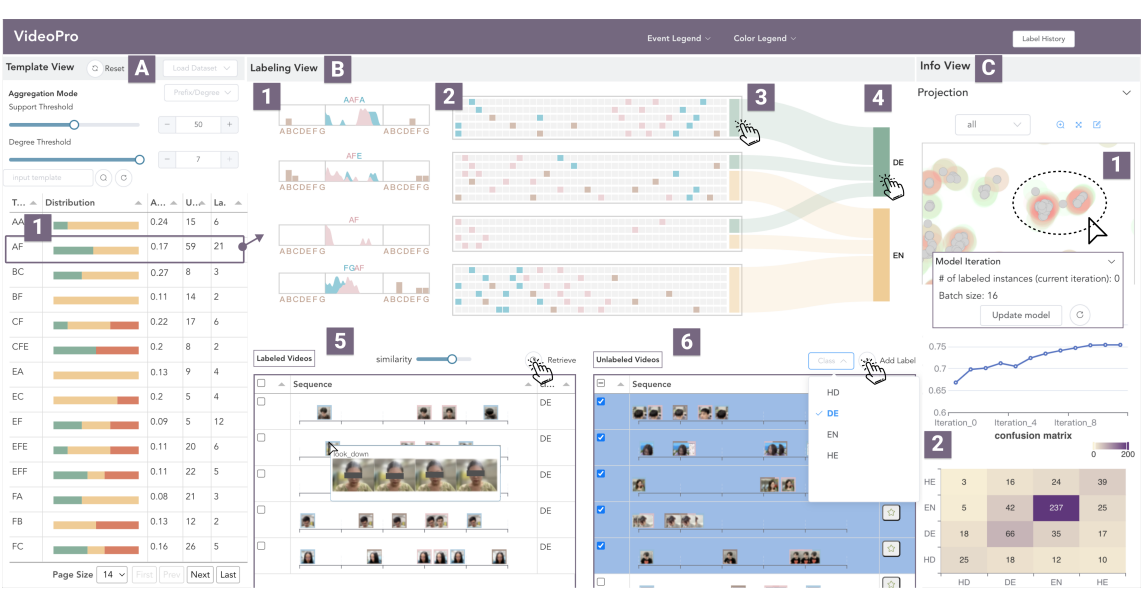

Jianben He, Xingbo Wang, Kam Kwai Wong, Xijie Huang, Changjian Chen, Zixin Chen, Fengjie Wang, Min Zhu, Huamin Qu IEEE Transactions on Visualization and Computer Graphics (VIS) 2023 Paper A visual analytics approach to support flexible and scalable video data programming for model steering with reduced human effort. |

|

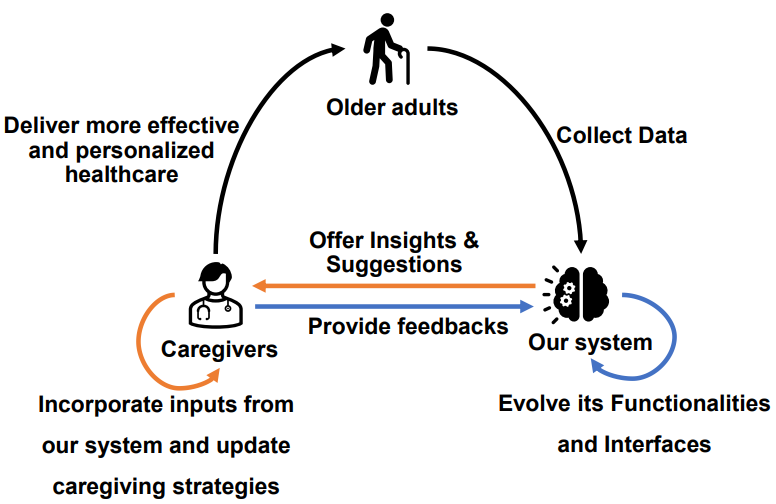

Xijie Huang, Jeffry Wicaksana, Shichao Li, Kwang-Ting Cheng AAAI Workshop on Health Intelligence, 2022 Paper An automatic, vision-based system for monitoring and analyzing the physical and mental well-being of senior citizens. |

|

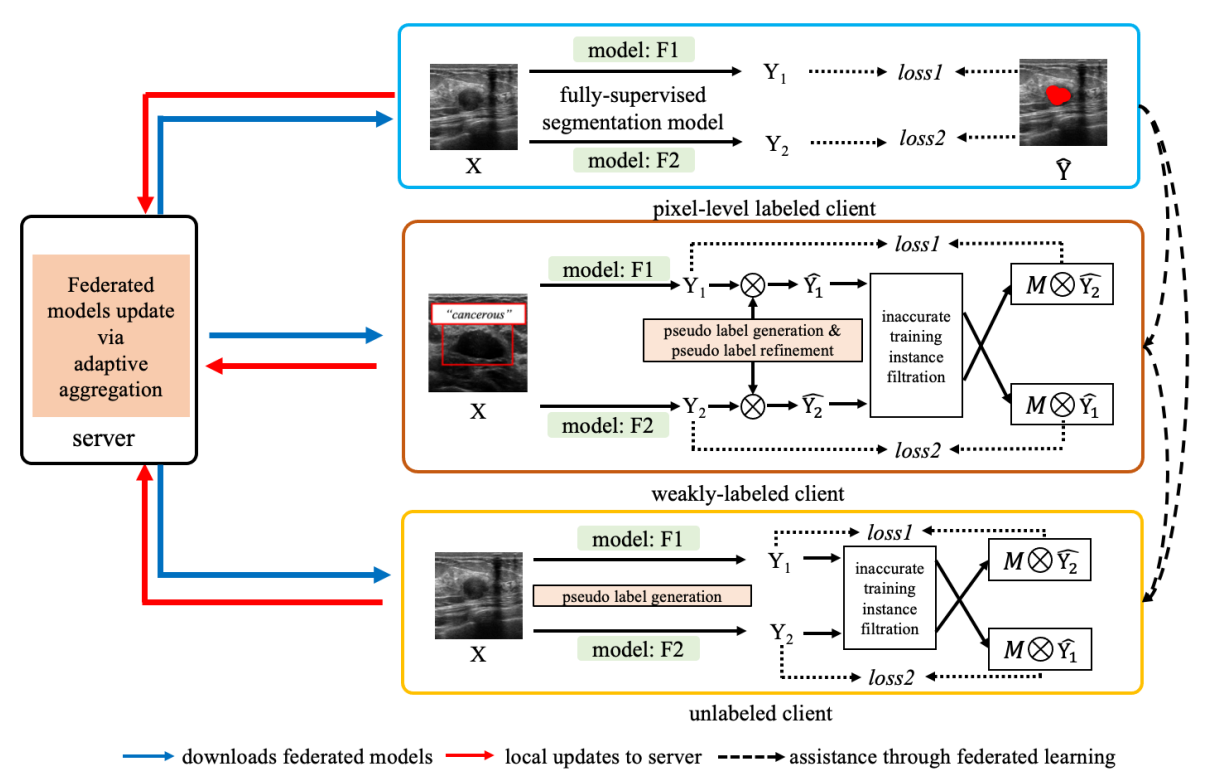

Jeffry Wicaksana, Zengqiang Yan, Dong Zhang, Xijie Huang, Huimin Wu, Xin Yang, Kwang-Ting Cheng IEEE Transactions on Medical Imaging (TMI), 2022 Paper/Code A label-agnostic unified federated learning framework, named FedMix, for medical image segmentation based on mixed image labels. In FedMix, each client updates the federated model by integrating and effectively making use of all available labeled data, ranging from strong pixel-level labels and weak bounding-box labels to the weakest image-level class labels. |

|

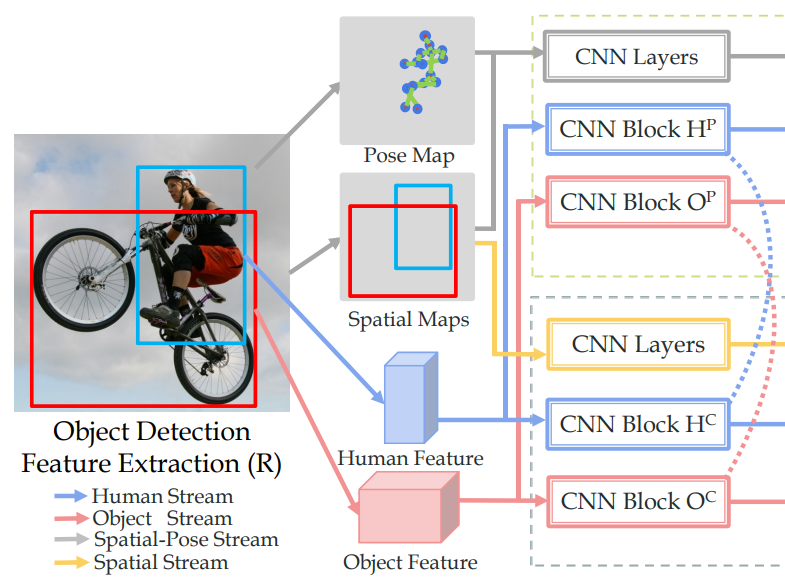

Yong-Lu Li, Siyuan Zhou, Xijie Huang, Liang Xu, Ze Ma, Hao-Shu Fang, Cewu Lu TPAMI 2021/CVPR 2019 Paper (TPAMI version) / Paper (CVPR version) / Code A transferable knowledge learner that can be combined with any HOI detection model to achieve desirable results. TIN outperforms state-of-the-art HOI detection results by a great margin, verifying its efficacy and flexibility. |

|

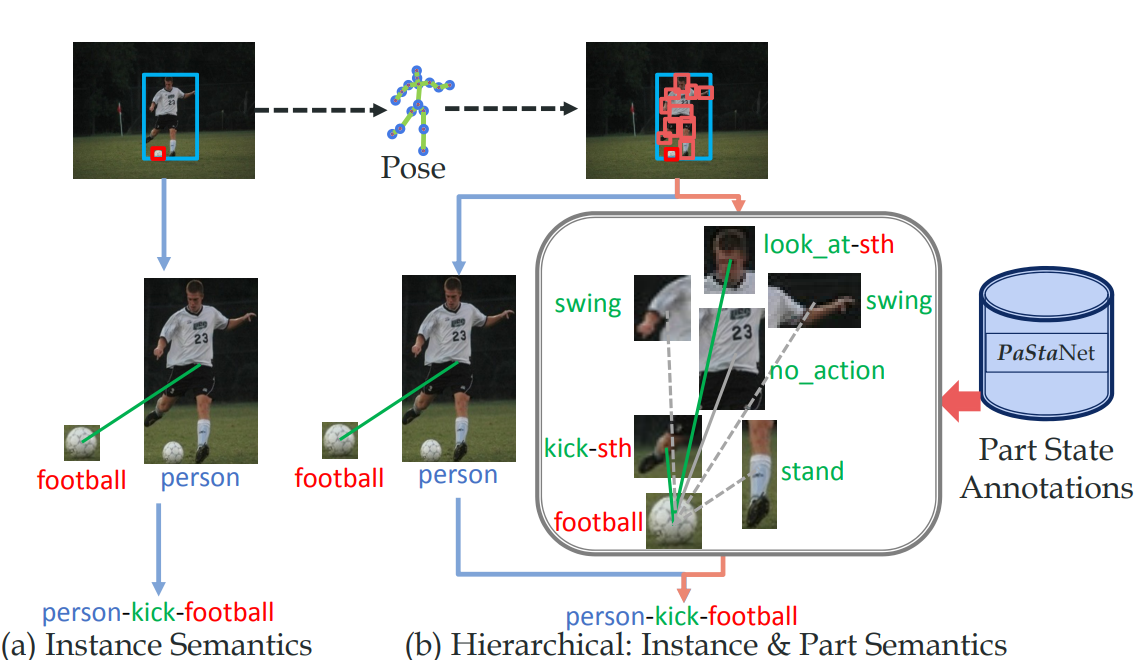

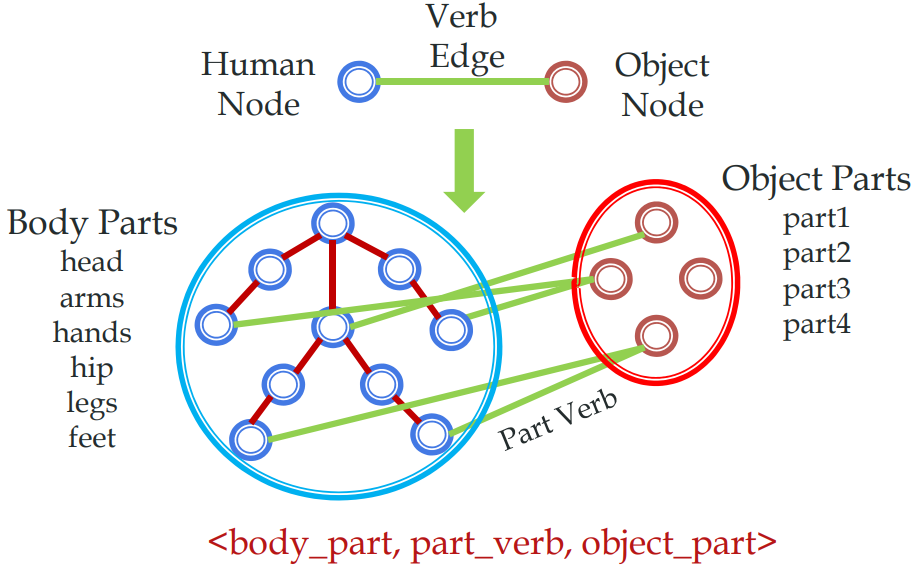

Yong-Lu Li, Liang Xu, Xinpeng Liu, Xijie Huang, Mingyang Chen, Shiyi Wang, Hao-Shu Fang, Cewu Lu CVPR 2020 Paper/ Code A large-scale knowledge base, PaStaNet, which contains 7M+ PaSta annotations. Two corresponding models are proposed; the first, named Activity2Vec, extracts PaSta features, which aim to be general representations for various activities. |

|

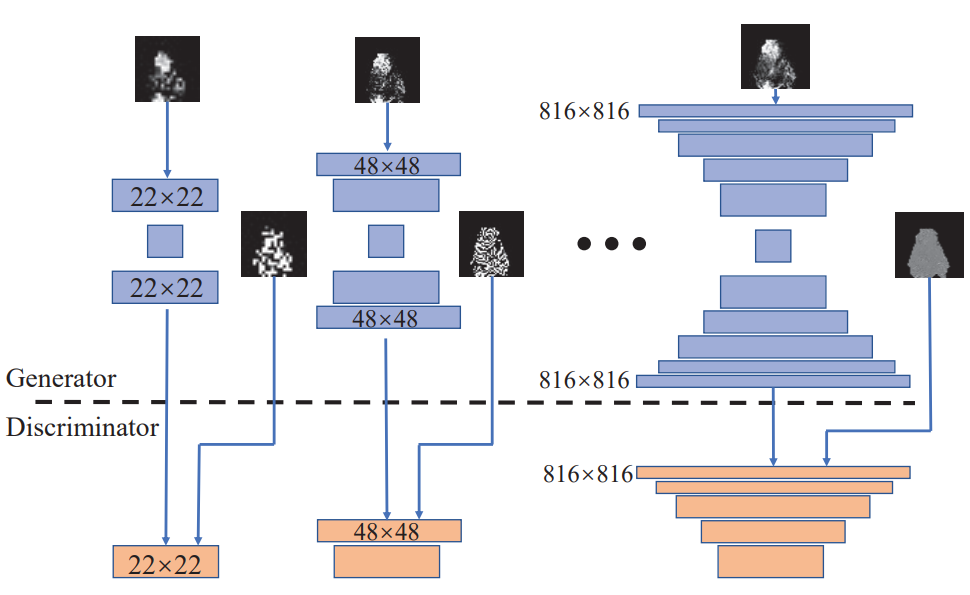

Xijie Huang, Peng Qian, Manhua Liu CVPRW 2020 Paper A latent fingerprint enhancement method based on the progressive generative adversarial network (GAN). |

|

Yong-Lu Li, Liang Xu, Xinpeng Liu, Xijie Huang, Ze Ma, Hao-Shu Fang, Cewu Lu Preprint Paper/ Project webpage A large-scale Human Activity Knowledge Engine (HAKE) based on human body part states to promote activity understanding. |

|

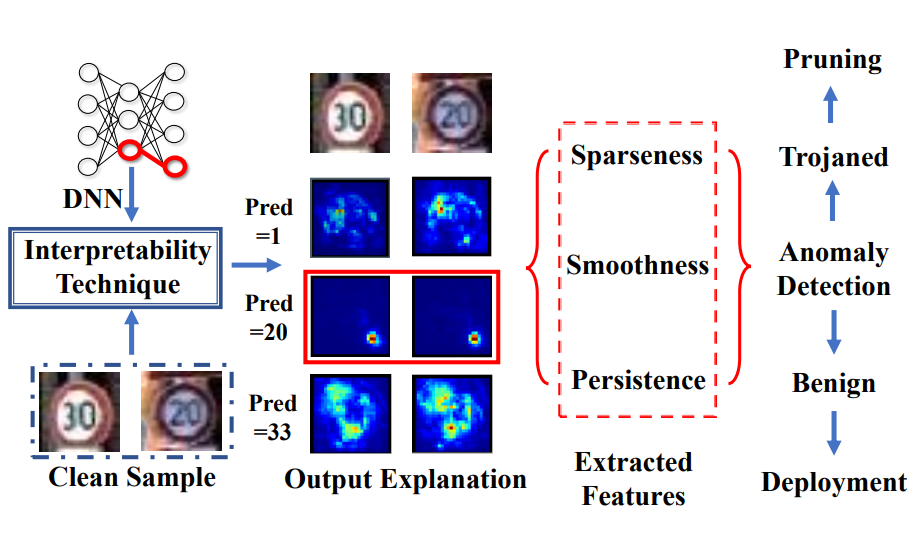

Xijie Huang, Moustafa Alzantot, Mani Srivastava Preprint Paper A framework to detect trojan backdoors in DNNs via output explanation techniques. |

|

|

|

Microsoft Research Asia

May 2023 - Feb 2024 Research Intern in System Research Group (SRG) Mentor: Li Lyna Zhang |

|

Snap Research

July 2024 - Dec 2024 Research Intern in Creative Vision group Mentors: Jian Ren, Anil Kag, and Yanwu Xu |

|

Meta Reality Lab

Oct 2025 - Present Research Engineer at Meta Reality Lab, Codec Avatar |

|

|

|

Reviewer, ICML, ICLR, NeurIPS, EMNLP, ARR (ACL, EMNLP, NAACL, etc.), ICCV, CVPR, ECCV, ACM MM, WACV, AAAI, TNNLS, TMLR

Top 10% Reviewer, ICML 2022

Program Committee, ICCV 2023 Workshop on Low-Bit Quantized Neural Networks

Technical Program Committee, ICCV 2023 Workshop on Resource Efficient Deep Learning for Computer Vision |

|

COMP 2211 (Exploring Artificial Intelligence), Lecturer: Professor Desmond Tsoi

COMP 5421 (Computer Vision), Lecturer: Professor Dan Xu

COMP 1021 (Introduction to Computer Science), Lecturer: Professor David Rossiter |

|

National Scholarship (Top 2% students in SJTU), 2017

A Class Scholarship (Top 2% students in SJTU), 2017

RongChang Academic Scholarship (Top 20 in SJTU), 2019

RedBird Scholarship, Postgraduate Studentship, HKUST, 2020-2024

AAAI-22 Student Scholarship, 2022

Stars of Tomorrow, Microsoft Research Asia, 2023

EMNLP D&I Award, 2024 |

|

Thanks to Barron's website template. |